FastVLM

In the world of AI, there’s a constant race to make models faster, more efficient, and smarter. While many powerful AI models run on massive cloud servers, much of AI’s potential lies in running directly on our own devices: smartphones, laptops, and tablets. This is the domain of on-device AI, where privacy is paramount and real-time performance is a necessity.

FastVLM, a Vision Language Model (VLM) developed by Apple Machine Learning Research, is a significant step forward in this space. It is designed to process high-resolution images and video frames quickly and efficiently while running directly on Apple Silicon. This means it can perform tasks like describing what your camera sees or answering questions about an image without sending your data to the cloud.

This guide takes a deep dive into FastVLM: its architecture, how it achieves its speed, how it compares with other leading models, and what it means for the future of on-device AI and user privacy.

What is FastVLM? A Vision-Language Model Redefined

FastVLM is a family of Vision Language Models (VLMs). A VLM is an AI system that can understand both visual content (images, videos) and text. It can answer questions about what it sees, generate descriptions of a scene, or perform tasks that require both visual and textual understanding.

What sets FastVLM apart is its focus on efficiency. It is built to address a major challenge in VLMs: the “resolution-latency trade-off.” A higher-resolution image carries more detail, but the vision encoder turns it into many more visual tokens, and every extra token adds work for the language model, so the whole system slows down sharply. FastVLM tackles this with a more efficient vision encoder, which gives it a markedly better balance of accuracy and speed than comparable VLMs on consumer devices.
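To make the trade-off concrete, here is a minimal sketch that counts the visual tokens a plain Vision Transformer produces at several resolutions. It assumes square images and a 14-pixel patch size (as in ViT-L/14) and uses the rough rule that self-attention cost grows with the square of the token count; the function names are illustrative and not part of any FastVLM code.

```python
# Minimal sketch: how a plain ViT's visual-token count grows with resolution.
# Assumptions: square images and a 14-pixel patch size (as in ViT-L/14);
# real encoders may add a few special tokens, which are ignored here.


def vit_token_count(resolution: int, patch_size: int = 14) -> int:
    """Number of patch tokens for a square image split into non-overlapping patches."""
    patches_per_side = resolution // patch_size
    return patches_per_side * patches_per_side


def relative_attention_cost(tokens: int, baseline_tokens: int) -> float:
    """Self-attention cost scales roughly with tokens**2; report it relative to a baseline."""
    return (tokens / baseline_tokens) ** 2


if __name__ == "__main__":
    base = vit_token_count(336)
    for res in (336, 672, 1008):
        tokens = vit_token_count(res)
        cost = relative_attention_cost(tokens, base)
        print(f"{res}px -> {tokens} tokens, ~{cost:.0f}x attention cost")
```

Tripling the side length from 336 to 1008 pixels multiplies the token count by nine (576 to 5,184) and the rough attention cost by about 81, which is why resolution and latency pull against each other.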

Key Goals of FastVLM’s Design

Apple developed FastVLM with three primary goals in mind:

- Speed and Efficiency: To perform complex visual tasks in real-time.

- On-Device Operation: To run locally on devices like iPhones, iPads, and MacBooks, ensuring user privacy and low latency.

- High-Resolution Capability: To understand intricate details in high-resolution images without a significant performance drop.

By achieving these goals, FastVLM unlocks new possibilities for AI applications, from real-time accessibility assistants to advanced image editing tools.

The Technology Behind the Speed: A Look at FastVLM’s Architecture

FastVLM’s performance comes mainly from its architecture. The gains do not come from faster hardware alone but from a more efficient way of handling visual data.

1. The FastViT-HD Vision Encoder

The key to FastVLM’s efficiency is its vision encoder, FastViT-HD, a hybrid convolutional-transformer architecture.

- What it does: The vision encoder’s job is to “read” an image and convert it into a set of “visual tokens,” a representation that the LLM (Large Language Model) can understand.

- Why it’s unique: Traditional vision encoders such as the Vision Transformer (ViT) split an image into fixed-size patches, so a high-resolution image produces a very large number of tokens, which slows down the entire system. FastViT-HD instead downsamples the image progressively over multiple stages, generating up to 4x fewer tokens than its predecessors. This drastically reduces the workload for the LLM.
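The multi-stage idea can be sketched as follows. The stage strides and the split between convolutional and attention stages are hypothetical placeholders chosen to illustrate a hybrid, hierarchical encoder; the FastVLM paper is the authority on FastViT-HD’s actual configuration. The point is that each stage shrinks the feature grid, so only a small grid reaches the attention layers and, finally, the LLM.

```python
# Sketch of why a hierarchical (multi-stage) encoder emits fewer tokens.
# Hypothetical settings for illustration only: a stride-4 first stage followed
# by four stages that each halve the grid (overall stride 64), with convolution
# in the early, high-resolution stages and self-attention only in the last two.

STAGE_STRIDES = [4, 8, 16, 32, 64]
STAGE_MIXERS = ["conv", "conv", "conv", "attention", "attention"]


def stage_grid_sizes(resolution: int) -> list[int]:
    """Side length of the feature grid after each stage for a square input."""
    return [resolution // stride for stride in STAGE_STRIDES]


def output_tokens(resolution: int) -> int:
    """Visual tokens handed to the LLM: one per cell of the final grid."""
    side = stage_grid_sizes(resolution)[-1]
    return side * side


if __name__ == "__main__":
    res = 1024
    for stride, mixer, side in zip(STAGE_STRIDES, STAGE_MIXERS, stage_grid_sizes(res)):
        print(f"stride {stride:>2}: {side}x{side} grid ({side * side} positions), {mixer}")
    print("tokens to LLM:", output_tokens(res))
    # For comparison: a ViT with 14-pixel patches at the same resolution.
    print("ViT-L/14 tokens at 1024px:", (res // 14) ** 2)
```

At 1024 pixels this hypothetical encoder hands 256 tokens to the LLM, versus 5,329 for a ViT with 14-pixel patches, and the expensive attention stages only ever see the small late-stage grids.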

2. Streamlined Model Design

Unlike models that reduce latency with extra techniques such as token pruning or token merging, FastVLM keeps the pipeline simple: it reaches its best accuracy-latency balance just by scaling the input image resolution. With fewer moving parts, the model is easier to deploy in real-world applications.
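Using input resolution as the single tuning knob can be illustrated with a toy helper that picks the largest resolution whose visual-token count fits a budget. The overall stride of 64 and the function names are hypothetical; a real deployment would tune against measured latency on the target device rather than a token count.

```python
# Toy sketch: trade accuracy against latency by choosing the input
# resolution alone, instead of pruning or merging tokens after encoding.
# Assumptions (hypothetical): square inputs, an encoder with overall stride 64,
# and a budget expressed in visual tokens as a rough proxy for LLM cost.


def tokens_at(resolution: int, stride: int = 64) -> int:
    """Visual tokens for a square input at the given resolution."""
    side = resolution // stride
    return side * side


def largest_resolution_within_budget(max_tokens: int, stride: int = 64,
                                     max_resolution: int = 2048) -> int:
    """Largest multiple of `stride` (up to max_resolution) whose token count fits the budget."""
    best = 0
    for res in range(stride, max_resolution + 1, stride):
        if tokens_at(res, stride) <= max_tokens:
            best = res
        else:
            break  # token count only grows with resolution, so stop early
    return best


if __name__ == "__main__":
    for budget in (64, 256, 1024):
        print(budget, "tokens ->", largest_resolution_within_budget(budget), "px")
```

Because nothing is discarded after encoding, every token the LLM receives reflects the whole image at the chosen resolution; the only decision is how large that resolution should be.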

3. Integration with LLMs

FastVLM’s architecture is modular. The visual tokens produced by FastViT-HD are passed to a language model (such as Qwen2 or Vicuna), which combines them with the text prompt and its general knowledge to generate coherent, accurate responses. Because FastViT-HD produces fewer tokens, and produces them quickly, FastVLM substantially reduces Time-to-First-Token (TTFT): the time between submitting a prompt and the model emitting the first token of its response.
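A rough way to see why fewer visual tokens cut TTFT is to model it as vision-encoding time plus the LLM’s prefill time over the whole prompt. The sketch below assumes prefill cost grows linearly with prompt length and holds encoder time fixed to isolate the prefill effect; the class, function, and timing constants are made-up placeholders, not FastVLM measurements.

```python
# Toy model of time-to-first-token (TTFT) for an encoder + LLM pipeline.
# Assumptions: TTFT ~= vision-encoding time + LLM prefill time, and prefill
# time grows linearly with the number of prompt tokens (visual + text).
# Real prefill also has a quadratic attention term. All constants are
# hypothetical placeholders, not measurements of FastVLM.

from dataclasses import dataclass


@dataclass
class PipelineCost:
    encoder_ms: float            # time for the vision encoder to produce visual tokens
    prefill_ms_per_token: float  # LLM prefill cost per prompt token


def time_to_first_token(cost: PipelineCost, visual_tokens: int, text_tokens: int) -> float:
    """Estimated TTFT in milliseconds for one image plus a text prompt."""
    prefill_ms = cost.prefill_ms_per_token * (visual_tokens + text_tokens)
    return cost.encoder_ms + prefill_ms


if __name__ == "__main__":
    cost = PipelineCost(encoder_ms=50.0, prefill_ms_per_token=0.5)  # made-up numbers
    for visual in (256, 5329):
        ttft = time_to_first_token(cost, visual, text_tokens=32)
        print(visual, "visual tokens ->", ttft, "ms")
```

Even in this simplified model, the visual tokens dominate the prompt, so shrinking them shortens the wait before the first word appears far more than shortening the text prompt would.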

This design is what lets the smallest FastVLM variant reach an 85x faster TTFT than LLaVA-OneVision-0.5B while using a vision encoder that is 3.4x smaller.

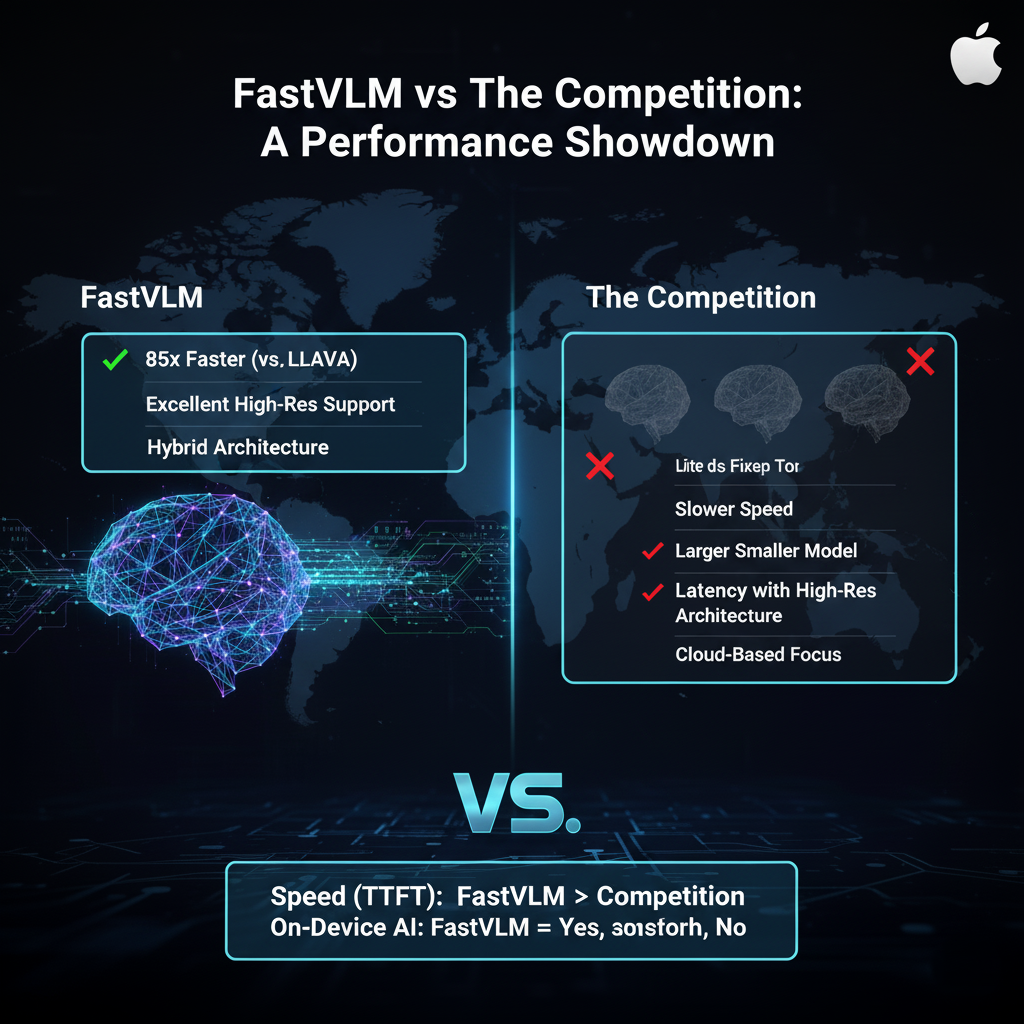

FastVLM vs. The Competition: A Performance Showdown

When FastVLM was introduced, it was compared with other leading open-source VLMs. Here’s a brief comparison that highlights its strengths:

| Feature | FastVLM | LLaVA-OneVision | Cambrian-1 |
|---|---|---|---|
| Speed (TTFT) | 85x faster than LLaVA-OneVision-0.5B (smallest variant) | Slower | Slower |
| Vision encoder size | 3.4x smaller than LLaVA-OneVision’s | Larger | Larger |
| High-resolution input | Designed for it; latency stays low | Higher latency at high resolution | Higher latency at high resolution |
| On-device focus | Primary design goal | Not a primary design goal | Not a primary design goal |
| Vision encoder | FastViT-HD (hybrid convolutional + transformer) | SigLIP (Vision Transformer) | Several vision encoders combined |
| Language decoder | Auto-regressive LLM | Auto-regressive LLM | Auto-regressive LLM |

FastVLM’s results on key benchmarks such as SEED-Bench and MMMU are highly competitive, which indicates that its speed does not come at the expense of accuracy. This favorable accuracy-latency trade-off makes it especially well suited to on-device AI.
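A “better accuracy-latency trade-off” can be made precise with the idea of a Pareto front: a model is on the front if no other model is at least as fast and at least as accurate while being strictly better on one of the two. The sketch below computes that front; the model names and numbers are hypothetical placeholders, not benchmark results.

```python
# Sketch: what "a better accuracy-latency trade-off" means in practice.
# A model is Pareto-optimal if no other model is no worse on both axes
# and strictly better on at least one.
# The entries below are hypothetical placeholders, not benchmark results.

from typing import NamedTuple


class Result(NamedTuple):
    name: str
    ttft_ms: float   # lower is better
    accuracy: float  # higher is better


def dominates(a: Result, b: Result) -> bool:
    """True if `a` is no worse than `b` on both axes and strictly better on one."""
    no_worse = a.ttft_ms <= b.ttft_ms and a.accuracy >= b.accuracy
    strictly_better = a.ttft_ms < b.ttft_ms or a.accuracy > b.accuracy
    return no_worse and strictly_better


def pareto_front(results: list[Result]) -> list[Result]:
    """Results not dominated by any other result, sorted by latency."""
    front = [r for r in results if not any(dominates(o, r) for o in results)]
    return sorted(front, key=lambda r: r.ttft_ms)


if __name__ == "__main__":
    candidates = [
        Result("model_a", 120.0, 61.0),
        Result("model_b", 900.0, 62.0),
        Result("model_c", 400.0, 58.0),
    ]
    for r in pareto_front(candidates):
        print(r.name, r.ttft_ms, r.accuracy)
```

Here `model_c` drops out because `model_a` is both faster and more accurate; a model with a better trade-off pushes this front toward lower latency at the same accuracy.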

Real-World Applications of FastVLM

The potential applications of FastVLM are vast and exciting, particularly because it runs on-device.

- Accessibility: FastVLM could power real-time assistants for people with visual impairments, describing on-screen content, reading documents, or describing what’s happening around the user with near-instant feedback.

- Real-Time Video Captioning: Imagine your phone’s camera instantly describing a live video stream. This could be used for security monitoring, sports analysis, or even live blogging.

- Augmented Reality (AR): FastVLM can be used in AR applications to understand the real world. An AR app could identify objects, provide information about them, or even guide you with visual cues.

- Image and Document Analysis: It could scan receipts, forms, or ID cards in real time and extract key information without an internet connection, which helps protect user privacy.

- Photography and Creative Apps: FastVLM could be integrated into camera apps to provide real-time suggestions, enhance photo editing, or even create new artistic effects based on what’s in the image.

The fact that FastVLM keeps all the processing on-device is a major win for user privacy. Your images and data never have to leave your phone or computer, which is a core part of Apple’s philosophy.

The Future of On-Device AI: What FastVLM Means

The release of FastVLM signals a clear and important trend in the AI industry. The future is not just about bigger models in the cloud; it’s about making AI smarter, faster, and more private on our own devices.

- Democratizing AI: By making powerful VLMs accessible on consumer hardware, Apple is empowering developers to build a new generation of smart, responsive, and privacy-focused applications.

- Hardware and Software Synergy: FastVLM is optimized for Apple Silicon and Apple’s MLX framework. This tight integration between hardware and software is a large part of why it runs so efficiently.

- Privacy by Design: On-device processing is one of the strongest ways to protect privacy. Users can benefit from powerful AI features without sending sensitive data to external servers.

- New Use Cases: The combination of speed and high-resolution capability opens up applications that were previously impractical on-device. Future devices like smart glasses or AR headsets could be powered by a model like FastVLM, providing instant, real-time information about the world around you.

FastVLM is a bold move by Apple, and it raises the bar for on-device AI. It shows that top-tier performance and user privacy can go together, which will be a key factor in the AI race moving forward.

Conclusion

FastVLM by Apple is a major achievement for on-device AI: a fast, efficient, privacy-focused Vision Language Model. Its FastViT-HD vision encoder, combined with its ability to run on Apple Silicon, lets it process high-resolution images quickly while remaining competitive in accuracy.

For developers, FastVLM is a publicly released, highly optimized tool for building the next generation of real-time, privacy-conscious applications. For users, it means access to powerful AI features that are faster, more private, and always available, whether or not you have an internet connection.

FastVLM is a clear signal that the future of AI is not just in the cloud, but also in the palm of your hand. It’s a testament to how intelligent design can solve complex problems and bring the benefits of AI to everyone in a secure and efficient way.