Introduction

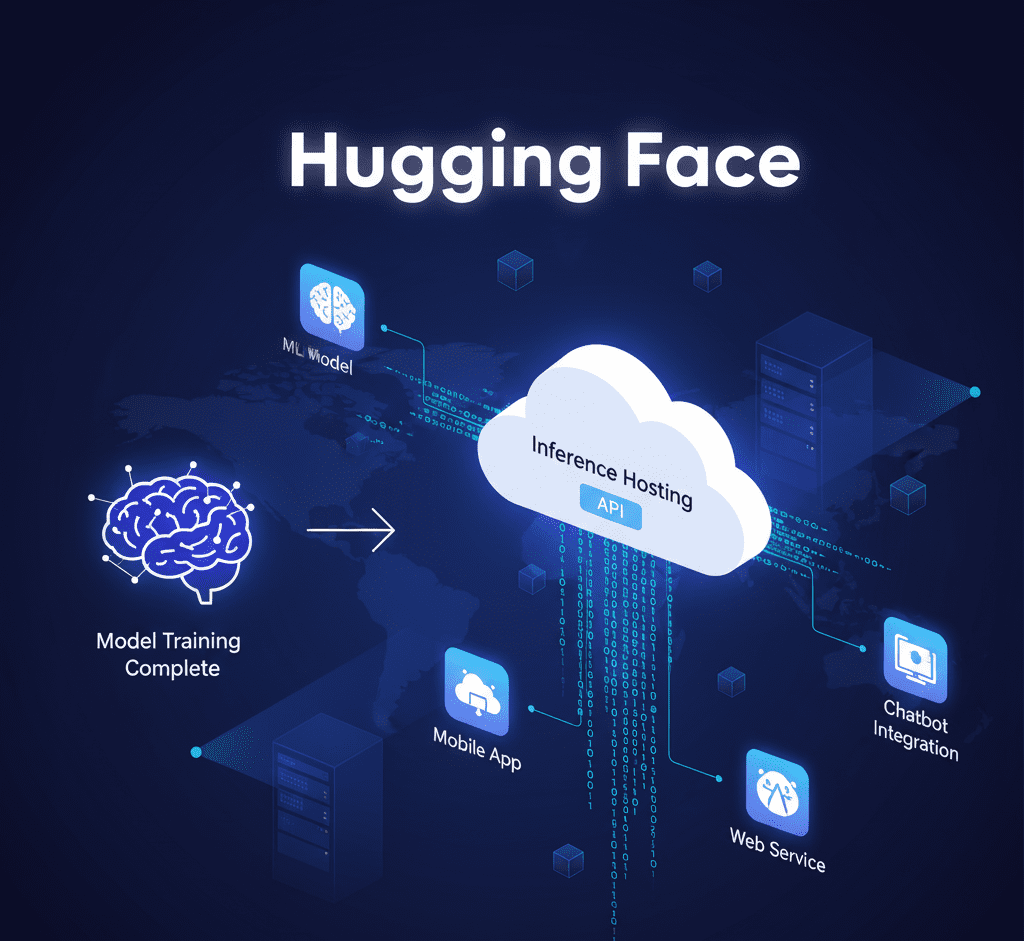

Machine learning (ML) models are no longer just for research labs. They power everyday applications, from chatbots and content generators to image recognition and speech-to-text services. However, a big challenge remains: how to take a trained ML model and make it accessible through a web or mobile app. Deployment is a core part of what is often called MLOps (Machine Learning Operations), and it is complex and requires specialized skills. It involves setting up servers, managing expensive GPUs, and ensuring your model can handle thousands of users at once.

This is where Hugging Face comes in. It has built an ecosystem that not only lets you find and share state-of-the-art models but also provides simple ways to deploy them. This article is a guide to Hugging Face’s deployment solutions: the Inference API and Inference Endpoints. We will explain everything in simple language, so you can confidently use these tools for your own projects, whether you’re a student, a developer, or a business owner.

1. Understanding the Hugging Face Ecosystem

Hugging Face is much more than just a place to download models. It is a complete AI hub.

H2: The Core Components

- Hugging Face Hub: Think of this as the central library of AI. It contains over 400,000 models, thousands of datasets, and countless demo applications. It’s where the entire AI community collaborates.

- Libraries: Hugging Face has created popular open-source libraries like transformers, diffusers, and accelerate. These libraries make it easy to use and train complex models with just a few lines of code.

- Inference Solutions: This is the part we will focus on. These are the tools that help you run your models in the cloud.

The main idea behind Hugging Face is to make powerful AI accessible and easy to use. It takes care of the hard parts of MLOps so you can focus on building great applications.

2. The Free Inference API: A Quick Start Guide

The Hugging Face Free Inference API is the simplest way to get started. It’s perfect for anyone who wants to test a model or build a small project without any cost or complex setup.

H3: What is It?

The Free Inference API is a service that lets you send an HTTP request to a public model on the Hugging Face Hub. The API runs the model on your input and sends back the result. You don’t need to worry about servers, GPUs, or deployment code. Hosting is handled for you; all you write is the request.

H3: How to Use It (Step-by-Step)

- Find Your Model: Go to the Hugging Face Hub and find a model you want to use.

- Navigate to the Inference API: On the model’s page, you will see a small widget on the right. This widget is powered by the Inference API. You can type your input there to test the model in real time.

- Get the API Code: Just below the widget, there’s a section that provides a code snippet. You can copy this code and paste it into your own application. It’s available for Python, JavaScript, and cURL.
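As a rough idea of what such a snippet does, here is a minimal sketch using only the Python standard library. It rests on several assumptions that are not taken from the steps above: the base URL is the one historically shown in Hub snippets (copy the exact URL from the model page instead), the request body uses an `inputs` field, and an access token is sent as a bearer token. The names `build_request` and `query` and the model ID in the usage note are illustrative only.

```python
import json
import urllib.request

# Assumption: base URL as historically shown in Hub code snippets.
# Always copy the exact URL from the snippet on the model's page.
API_BASE = "https://api-inference.huggingface.co/models"


def build_request(model_id: str, inputs: str, token: str) -> urllib.request.Request:
    """Build (but do not send) a POST request for a hosted model."""
    payload = json.dumps({"inputs": inputs}).encode("utf-8")
    return urllib.request.Request(
        url=f"{API_BASE}/{model_id}",
        data=payload,
        headers={
            "Authorization": f"Bearer {token}",  # assumption: token-based auth
            "Content-Type": "application/json",
        },
        method="POST",
    )


def query(model_id: str, inputs: str, token: str, timeout: float = 30.0):
    """Send the request and decode the JSON response."""
    request = build_request(model_id, inputs, token)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Calling `query("some-user/some-model", "Hello", token)` with a real model ID and token would perform the network request. The shape of the returned JSON depends on the model’s task.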

H3: Why It’s Great for Beginners

- Zero Cost: It’s completely free to use.

- Zero Setup: No need to install anything. You can start using it immediately.

- Easy to Learn: The API is very straightforward. It’s a great way to learn how to integrate AI models into your code.

H3: Limitations to Remember

The Free Inference API is excellent for learning, but it’s not for serious, production-level applications.

- Best-Effort Service: There are no guarantees on uptime or performance. The service might be slow or unavailable at times.

- Rate Limits: It has strict limits on how many requests you can make in a given time. If your app gets popular, requests beyond the limit will be rejected.

- Cold Starts: When a model has not been used recently, it has to be loaded before it can answer, and the first request takes a while. This delay, called a cold start, can be very frustrating for users.
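A client can soften rate limits and cold starts by retrying with an increasing delay. The sketch below is one generic way to do that, not something prescribed by Hugging Face. It assumes a rate-limited request comes back as HTTP 429 and a still-loading model as HTTP 503; check the responses you actually receive. The `send` callable is a placeholder for whatever function performs your request and returns a status code and a body.

```python
import time
from typing import Callable, Tuple

# Assumption: 429 signals rate limiting, 503 signals a model that is still loading.
RETRYABLE_STATUSES = {429, 503}


def call_with_retry(
    send: Callable[[], Tuple[int, str]],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, str]:
    """Call send() until it returns a non-retryable status or attempts run out."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        status, body = send()
        if status not in RETRYABLE_STATUSES:
            return status, body
        if attempt < max_attempts - 1:
            # Exponential backoff: base_delay, 2x, 4x, ...
            sleep(base_delay * 2 ** attempt)
    # Every attempt was retryable; hand back the last response.
    return status, body
```

The `sleep` parameter is injectable so the waiting logic can be tested without real delays.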

3. Inference Endpoints: Your Professional Hosting Solution

For a reliable, high-performance, and scalable solution, you need to use Hugging Face Inference Endpoints. This is a paid, production-ready service that provides dedicated resources for your models.

H3: What are Inference Endpoints?

An Inference Endpoint is a private, dedicated instance of your model running on a powerful cloud server. Hugging Face manages everything, from the server setup to maintenance and security. Your model is always “on” and ready to respond quickly.

H3: Key Features for Professional Use

- Dedicated Performance: Your model runs on a dedicated GPU or CPU, so you get consistent, fast response times.

- Fully Managed Autoscaling: This is a key feature. When your website or app gets a lot of traffic, the endpoint automatically creates more copies of your model to handle the load. When the traffic goes down, it scales back, saving you money.

- Pay-Per-Second Billing: You pay only for the time your endpoint is running, and you can pause it or let it scale to zero when it is idle. This can be much more cost-effective than keeping your own server up 24/7.

- Security: Your endpoint is private and secure. You can use a private network to ensure your data stays safe.
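To make the autoscaling idea concrete, here is a toy calculation of how many model copies (replicas) a given load would call for. It is an illustration only and does not describe the rule Hugging Face actually applies; `capacity_per_replica` and the default replica bounds are hypothetical values you would measure or configure yourself.

```python
import math


def replicas_needed(
    requests_per_second: float,
    capacity_per_replica: float,
    min_replicas: int = 1,
    max_replicas: int = 4,
) -> int:
    """Return the replica count for the load, clamped to the allowed range."""
    if capacity_per_replica <= 0:
        raise ValueError("capacity_per_replica must be positive")
    if min_replicas < 0 or max_replicas < min_replicas:
        raise ValueError("need 0 <= min_replicas <= max_replicas")
    # Enough replicas to cover the load, rounded up to a whole replica.
    wanted = math.ceil(requests_per_second / capacity_per_replica)
    # Never go below the minimum or above the maximum.
    return max(min_replicas, min(max_replicas, wanted))
```

With a minimum of zero replicas, an idle endpoint in this model drops to no running copies at all, which is where the cost savings come from.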

H3: The Deployment Process (Simplified)

- Select Your Model: Go to the Hugging Face Hub and choose the model you want to deploy.

- Create an Endpoint: On the model’s page, go to the “Deploy” button and choose “Inference Endpoints.”

- Configure Your Setup: Here, you choose the cloud provider (like AWS or Azure), the server location, and the type of GPU or CPU you need. Hugging Face gives recommendations to make it easy.

- Deploy: Click “Create.” Hugging Face handles everything. Within a few minutes, your private endpoint will be ready.
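Once the endpoint is ready, your application calls it over HTTPS at the URL shown on the endpoint’s page. The sketch below builds such a request with the standard library. The setting names `MY_ENDPOINT_URL` and `MY_HF_TOKEN` are hypothetical, and the `inputs` payload and bearer-token header are assumptions that depend on the task and security level you chose.

```python
import json
import urllib.request
from typing import Mapping


def build_endpoint_request(env: Mapping[str, str], inputs: str) -> urllib.request.Request:
    """Build (but do not send) a POST request for a dedicated endpoint."""
    # Hypothetical setting names: MY_ENDPOINT_URL is the URL copied from the
    # endpoint's page, MY_HF_TOKEN is an access token allowed to call it.
    try:
        url = env["MY_ENDPOINT_URL"]
        token = env["MY_HF_TOKEN"]
    except KeyError as missing:
        raise RuntimeError(f"missing setting: {missing}") from None
    return urllib.request.Request(
        url=url,
        data=json.dumps({"inputs": inputs}).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

In an application you would pass `os.environ` as `env` and send the result with `urllib.request.urlopen`. Keeping the URL and token in settings rather than in code means the same program can point at a different endpoint without changes.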

4. Hugging Face vs. Traditional Cloud Hosting

Many people wonder why they should use Hugging Face when they can deploy a model on services like AWS SageMaker or Google Cloud Vertex AI. Here’s a simple comparison.

H3: Advantages of Hugging Face

- Ease of Use: Hugging Face is designed for simplicity. You don’t need to be an expert in cloud infrastructure. It’s a few clicks and your model is live.

- Cost-Effective: The pay-per-second model is often cheaper than managing your own servers, which can be complex and wasteful.

- Fast Deployment: With Inference Endpoints, you can go from a model on the Hub to a live API in a matter of minutes, not hours or days.

- Unified Ecosystem: Everything is in one place: models, datasets, and deployment. This saves a lot of time and effort.

H3: When to Use Other Platforms

- Deep Customization: If you need complete control over every single detail of your server, software, and networking, a traditional cloud platform might be a better choice.

- Specific Integrations: If your project requires tight integration with other complex services within one cloud ecosystem, you might find it easier to use that provider’s tools.

For most developers and companies, Hugging Face offers a better balance of power and simplicity. It allows you to focus on building your product, not on managing servers.

5. Frequently Asked Questions (FAQs)

H3: What’s the difference between the Free API and Inference Endpoints?

The Free API is for testing and small projects, while Inference Endpoints are for commercial, high-traffic applications. Endpoints offer dedicated resources, better performance, and autoscaling, which the Free API does not have.

H3: How is the cost of an Inference Endpoint calculated?

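You are billed per second for the time your endpoint is running, not per request. For example, if your endpoint runs for a total of 10 minutes in a day and is paused or scaled to zero the rest of the time, you are charged only for those 10 minutes. This is why it can be cost-effective for workloads that are not busy around the clock.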
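The arithmetic behind such an estimate is simple: the hourly rate of the chosen hardware, times the number of running replicas, times the running time in hours. The sketch below uses a made-up rate of $1.80 per hour purely for illustration; real prices depend on the instance you select.

```python
def estimate_cost_usd(
    hourly_rate_usd: float,
    seconds_running: float,
    replicas: int = 1,
) -> float:
    """Estimate cost as hourly rate x replicas x running time in hours."""
    if hourly_rate_usd < 0 or seconds_running < 0 or replicas < 0:
        raise ValueError("inputs must be non-negative")
    return hourly_rate_usd * replicas * seconds_running / 3600


# Hypothetical rate, for illustration only.
RATE = 1.80
ten_minutes = estimate_cost_usd(RATE, 10 * 60)       # about $0.30
full_day = estimate_cost_usd(RATE, 24 * 60 * 60)     # about $43.20
```

At this hypothetical rate, ten minutes of running time costs roughly 30 cents, while the same endpoint left running for a full day costs about $43.20.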

H3: Can I deploy a private model using an Inference Endpoint?

Yes. You can deploy both public and private models from the Hugging Face Hub. A private model stays private, and you can restrict access to its endpoint with token authentication or a private network.

H3: Is Hugging Face suitable for beginners?

Yes, it is one of the most beginner-friendly platforms. The documentation is excellent, and the simple workflow allows anyone to start using powerful AI without a lot of technical knowledge.

H3: What kind of models can I host on Hugging Face?

You can host a vast range of models, including those for:

- Text Generation (like GPT-2, Llama)

- Image Generation (like Stable Diffusion)

- Image Classification and Object Detection

- Speech-to-Text (like Whisper)

- And many more!

Conclusion

Hugging Face has made model deployment simpler, more efficient, and more accessible. The platform’s Inference API and Inference Endpoints address some of the hardest parts of MLOps. The Free Inference API is an excellent starting point for learning and prototyping, while Inference Endpoints offer a robust, professional solution for production-level applications.

By providing a unified ecosystem of models, datasets, and deployment tools, Hugging Face empowers developers to build incredible AI-powered applications without getting bogged down in infrastructure. Whether you are a student exploring AI for the first time or a business looking to scale your services, Hugging Face provides the perfect set of tools to bring your ideas to life. It is not just a platform; it is the future of accessible and collaborative AI.