New generative AI models are released at a dizzying pace. While the headlines are often dominated by models that create stunning visuals, the real shift lies in tools that give creators control. The ability not just to generate a video but to direct it, controlling camera movement, lighting, and composition, is something most AI models have struggled with.

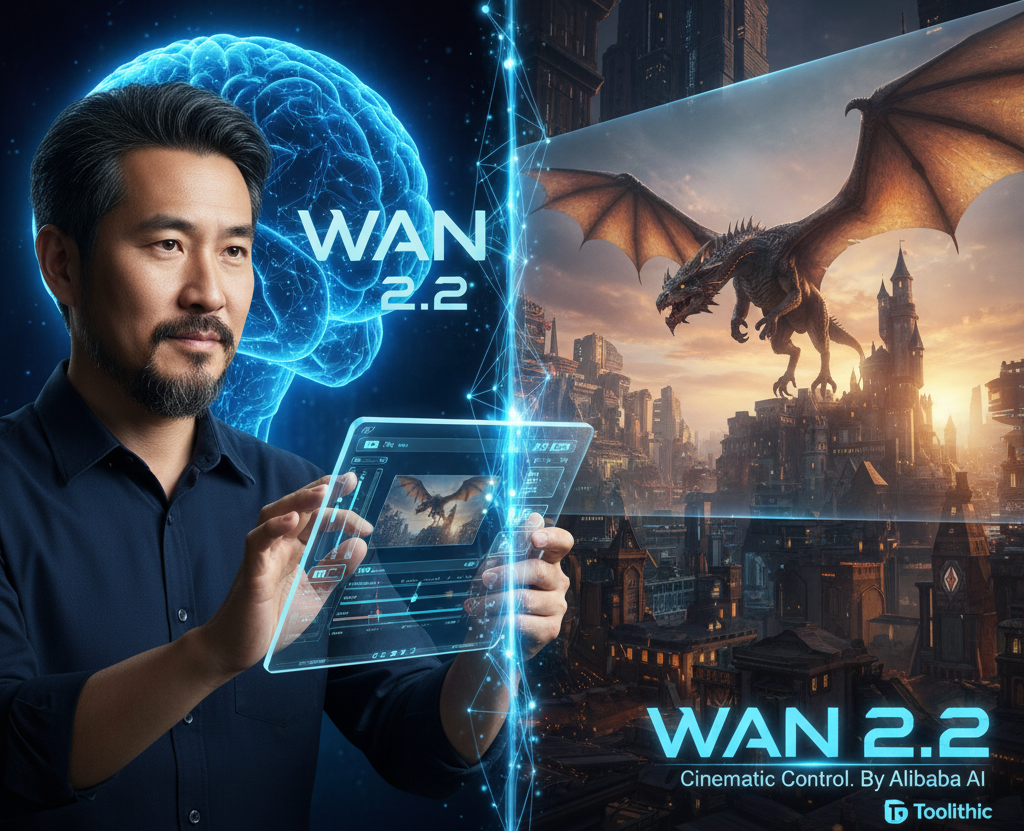

Wan 2.2, an AI model from Alibaba, aims to change that. It is built to work more like a director: a foundational model that lets creators generate cinematic-quality video with precise camera control from a simple text prompt.

This guide takes a close look at Wan 2.2. We will explore its architecture, explain how it achieves artistic control, compare it with other leading models, and discuss its impact on filmmaking, content creation, and the wider creative industry.

What is Wan 2.2? A Director-Grade AI for Video Creation

Wan 2.2 is an AI image and video generation model designed to produce high-quality, cinematic visuals from a simple text prompt. Developed by Alibaba’s Wan AI team, it is an upgrade to the earlier Wan 2.1 model, with a focus on giving creators a high degree of control over style, composition, and temporal coherence.

At its core, Wan 2.2 is built on a powerful Diffusion Transformer (DiT) architecture, a modern approach to generative AI. This allows the model to understand complex prompts and generate a final image or video with an incredible level of detail and coherence. The primary purpose of Wan 2.2 is to be a versatile tool for visual creators, offering a single platform for both image and video generation.

Why Wan 2.2 is a Game-Changer

Wan 2.2 addresses several key limitations of previous AI video models:

- Superior Cinematic Control: Many models can generate videos, but they struggle to maintain a specific cinematic style. Wan 2.2 excels at this, allowing creators to specify camera movements (like pans and tilts), lighting styles (e.g., “moody sidelighting”), and aesthetic moods.

- Temporal Coherence: This is the holy grail of AI video generation. It refers to the model’s ability to keep objects, characters, and scenes consistent from one frame to the next. Wan 2.2 is highly effective at this, so a character’s appearance doesn’t change randomly and objects stay where they belong.

- Complex Prompt Understanding: The model can follow long prompts with multiple elements. For example, “An epic, high-fantasy scene with a flying dragon casting a shadow over a medieval castle, with a dramatic sunset in the background” combines a subject, an action, a setting, and a lighting condition, and the model is designed to render all of them in a single scene.
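
To make the idea of temporal coherence more concrete, here is a minimal Python sketch of one crude way to quantify frame-to-frame stability. It is an illustration only, not how Wan 2.2 is evaluated: the names `frame_difference` and `temporal_stability` are hypothetical, frames are assumed to be small grayscale grids with values between 0 and 1, and the metric cannot tell unwanted flicker from intended motion.

```python
from typing import List

# A grayscale frame: rows of pixel intensities in the range [0, 1].
Frame = List[List[float]]


def frame_difference(a: Frame, b: Frame) -> float:
    """Mean absolute per-pixel difference between two equally sized frames."""
    total = 0.0
    count = 0
    for row_a, row_b in zip(a, b):
        for pixel_a, pixel_b in zip(row_a, row_b):
            total += abs(pixel_a - pixel_b)
            count += 1
    return total / count


def temporal_stability(frames: List[Frame]) -> float:
    """Average difference between consecutive frames; lower means steadier."""
    diffs = [frame_difference(f0, f1) for f0, f1 in zip(frames, frames[1:])]
    return sum(diffs) / len(diffs)


# Toy clips: one that never changes and one that flips between black and white.
black = [[0.0, 0.0], [0.0, 0.0]]
white = [[1.0, 1.0], [1.0, 1.0]]
gray = [[0.5, 0.5], [0.5, 0.5]]

steady_clip = [gray, gray, gray]
flicker_clip = [black, white, black]

print(temporal_stability(steady_clip))
print(temporal_stability(flicker_clip))
```

A flickering clip scores far higher than a steady one, which matches the intuition behind the term: a coherent video changes smoothly between neighboring frames instead of jumping.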

The Technology Under the Hood: The MoE Secret

The performance of Wan 2.2 comes from an architectural design that departs significantly from simpler models. It shows how specialized AI can solve specific, real-world problems. The main secret behind its power is a Mixture-of-Experts (MoE) architecture, which Alibaba describes as a first for open-source video diffusion models.

Think of it like a team of two expert artists working on a single project:

- High-Noise Expert: This expert handles the initial, “rough draft” stages of video generation. When the image is still mostly noise, this expert focuses on the overall scene layout, motion, and structural consistency.

- Low-Noise Expert: Once the rough draft is complete and the image is becoming clearer, the low-noise expert takes over. This expert refines the details, sharpens textures, polishes the lighting, and ensures every element looks perfect and consistent.

This two-expert system makes the model more efficient and powerful. While the full architecture has 27 billion parameters, only one expert (roughly 14 billion parameters) is active at any given denoising step, so you get much of the quality of a larger model at about the per-step compute cost of one roughly half its size.
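
The two-expert hand-off described above can be sketched in a few lines of Python. This is a simplified illustration, not Wan 2.2’s actual code: the `Expert` class, the `BOUNDARY` value of 0.5, the linear noise schedule, and the even split of the 27 billion parameters are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Expert:
    name: str
    params_billions: float  # assumed size, for illustration only


# Assumption: the 27B total is split evenly between the two experts.
HIGH_NOISE = Expert("high_noise", 13.5)
LOW_NOISE = Expert("low_noise", 13.5)

# Assumption: hand over when half the noise is gone. The real switch point
# is a model-specific setting and is not taken from the source.
BOUNDARY = 0.5


def denoising_schedule(num_steps: int) -> List[float]:
    """Noise levels starting at 1.0 (pure noise) and decreasing linearly."""
    return [1.0 - step / num_steps for step in range(num_steps)]


def select_expert(noise_level: float, boundary: float = BOUNDARY) -> Expert:
    """Route noisy early steps to one expert and cleaner late steps to the other."""
    return HIGH_NOISE if noise_level >= boundary else LOW_NOISE


def plan(num_steps: int) -> List[str]:
    """Name of the expert that would run at each denoising step."""
    return [select_expert(level).name for level in denoising_schedule(num_steps)]


total_params = HIGH_NOISE.params_billions + LOW_NOISE.params_billions
active_params = select_expert(1.0).params_billions  # one expert runs per step

print(plan(4))
print(f"total: {total_params}B, active per step: {active_params}B")
```

The point of the sketch is that routing depends only on how noisy the current step is, so just one expert’s weights are used at a time even though both contribute to the finished video.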

How it Achieves Cinematic Control

Wan 2.2 was trained on a curated dataset that included detailed labels for cinematic elements such as lighting, composition, contrast, and color tone. This allowed the model to learn the language of filmmaking. As a result, a short prompt like “a low-angle shot with warm backlight” maps directly onto concepts the model saw during training.
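
One practical way to use this is to keep cinematic directions as separate fields and assemble the prompt from them. The sketch below is a hypothetical helper, not part of any Wan 2.2 tooling: `ShotSpec` and `build_prompt` are names invented for this example, and the comma-separated format is just one reasonable convention.

```python
from dataclasses import dataclass


@dataclass
class ShotSpec:
    """Hypothetical container for the parts of a cinematic prompt."""
    subject: str
    camera: str = ""    # e.g. "low-angle shot", "slow pan left"
    lighting: str = ""  # e.g. "warm backlight", "moody sidelighting"
    mood: str = ""      # e.g. "melancholic", "epic"


def build_prompt(spec: ShotSpec) -> str:
    """Join the non-empty fields into a single comma-separated prompt."""
    parts = [spec.subject, spec.camera, spec.lighting, spec.mood]
    return ", ".join(part.strip() for part in parts if part.strip())


shot = ShotSpec(
    subject="a lone hiker on a mountain ridge",
    camera="low-angle shot",
    lighting="warm backlight",
)
print(build_prompt(shot))
# prints: a lone hiker on a mountain ridge, low-angle shot, warm backlight
```

Keeping camera, lighting, and mood in separate fields makes it easy to change one direction at a time and compare the resulting clips.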

Wan 2.2 vs. The Competition: A Head-to-Head Comparison

The generative AI landscape is a battleground of giants. Here’s how Wan 2.2 measures up against two key competitors, Sora and Kling AI, with a focus on the strengths that set each model apart.

| Feature | Wan 2.2 | Sora by OpenAI | Kling AI |
| --- | --- | --- | --- |
| Developer | Alibaba | OpenAI | Kuaishou (China) |
| Core Function | Cinematic Video Generation | Static, cinematic video generation | Long-form video generation |
| Key Advantage | Superior artistic control and prompt adherence. | Unmatched realism and complex scene understanding. | Longer video generation (up to 2 minutes) with realistic physics. |
| Technology | MoE Architecture | Proprietary Diffusion Transformer (DiT) | Diffusion Transformer (DiT) |
| Temporal Coherence | Excellent | Exceptional | Very good |

While Sora is still the king of raw cinematic realism and Kling AI is known for its ability to create longer videos, Wan 2.2 is carving out a niche as the go-to tool for creators who need precise control over the style and composition of their visuals. This is a significant advantage for filmmakers and advertisers.

Real-World Applications for Creators and Businesses

The capabilities of Wan 2.2 open up a world of possibilities for professionals and creators. Here are some of the ways it can be used to revolutionize the creative process:

- Filmmaking and Pre-visualization: A filmmaker can use this AI video model to quickly generate concept videos and storyboards with precise camera directions. This saves immense time and money on pre-production.

- Advertising and Marketing: A marketing agency can use Wan 2.2 to create a series of ad campaigns with a consistent artistic style, all from a single prompt. This allows them to iterate on ideas and test different visuals with unprecedented speed.

- Visual Content Creation: For social media, blogs, and websites, Wan 2.2 can be used to generate a unique visual identity. A content creator can use a single prompt to generate a series of images and videos that all share the same style, which is a massive time-saver.

- Dynamic Storytelling: Writers can use this AI image generator to bring their stories to life with cinematic flair. They can generate scenes with specific camera angles and lighting to convey a particular emotion or mood, which is something a simple text-to-image model cannot do.

For more information on other AI tools, you can also read our guide on [The Ultimate Guide to Kling AI] to see how its features compare to Wan 2.2’s focus on artistic control.

Conclusion: Wan 2.2 is a New Frontier for AI Creativity

Wan 2.2 by Alibaba is a significant step forward for AI-powered image and video generation. Its Mixture-of-Experts architecture and its specialization in artistic control make it a strong foundational model for visual creators.

For creators, designers, and marketers, Wan 2.2 is a game-changer. It not only enhances the visual quality of their work but also provides a level of control and precision that was previously hard to get from AI video tools.

Wan 2.2 is a clear signal that the future of AI is not just about raw power, but about specialization and solving real-world creative problems. It is a tool that will empower creators to bring their ideas to life with a new level of confidence and artistic freedom. To learn more about this model, you can read the official announcement on the Alibaba Tongyi Lab blog.